5.2.2

Release date: 03-08-2023

NLP Lab 5.2: Introducing Synthetic Data Generation with ChatGPT, improved HIPAA compliance with S3 integration and disabled local exports, enhanced Section-Based Annotation, and much more!

We are thrilled to announce the release of NLP Lab 5.2, packed with exciting new features to elevate your annotation experience and streamline your NLP projects. With synthetic task generation powered by ChatGPT, you can effortlessly create diverse text documents that enrich your dataset for more robust training. Amazon S3 is now integrated for task and project export, making it easier to collaborate, access your annotated data, and share it safely. For data security and privacy, administrators can also disable the export of tasks and projects to local workstations, so that sensitive information stays within the confines of the platform.

Furthermore, our Section-based annotation feature has received significant enhancements, including support for task splitting with external services, targeted pre-annotation for relevant sections, and pre-annotation for all sections defined for a given task. These improvements streamline your annotation workflow, saving you valuable time and effort.

Experience the power of NLP Lab v5.2 today, and elevate your annotation and data management to new heights!

Here are the highlights of this release:

Synthetic task generation with ChatGPT

With NLP Lab 5.2, you can harness the potential of synthetic documents generated by LLMs such as ChatGPT. This integration allows you to easily create diverse and customizable synthetic text for your annotation tasks, enabling you to balance any entity skewness in your data and to train and evaluate your models more efficiently.

NLP Lab offers seamless integration with ChatGPT, enabling on-the-fly text generation. It can also manage multiple service provider key pairs, and each service provider can be assigned to specific projects, simplifying resource management. During the integration process, each service provider key can be validated from the UI (User Interface).

Once a service provider is integrated, it can be used in any project that benefits from text generation. Provide a prompt adapted to your data needs (you can test it in the ChatGPT app and copy/paste it into NLP Lab when ready) to start the generation process and obtain the required tasks. You can further control the results with the “Temperature” and “Number of text to generate” settings. “Temperature” governs the “creativity”, or randomness, of the LLM-generated text: higher values (e.g., 0.7) yield more diverse and creative outputs, whereas lower values (e.g., 0.2) produce more deterministic and focused outputs.
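To make the effect of “Temperature” concrete, here is the temperature-scaled softmax commonly used when sampling from language models. It is a generic illustration, not NLP Lab's or OpenAI's implementation, and the three token scores are made up. Dividing the scores by a small temperature sharpens the distribution toward the top candidate; a larger temperature flattens it, so less likely tokens get sampled more often.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw token scores into probabilities, scaled by temperature (> 0)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    # Subtract the maximum before exponentiating, for numerical stability.
    top = max(scaled)
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate next tokens.
scores = [2.0, 1.0, 0.5]
for t in (0.2, 0.7):
    probs = softmax_with_temperature(scores, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

Running it shows the top token taking almost all of the probability mass at 0.2, while at 0.7 the other two candidates keep a noticeably larger share.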

NLP Lab displays the generated text in a dedicated UI where users can review, edit, and tag it in place. After review and editing, the generated texts can be imported into the project as tasks that serve as annotation data for model training. They can also be downloaded locally in CSV format for reuse in other projects.

NLP Lab will soon support integration with additional service providers, giving users more options for efficient and robust model development.

Integration with Amazon S3 for task and project export

NLP Lab 5.2 offers seamless integration with Amazon Simple Storage Service (Amazon S3). Users can now export annotated tasks and projects directly to a given S3 bucket. This enhancement simplifies data management and ensures a smooth transition from annotation to model training and deployment.

In previous versions, exported tasks were always downloaded to the local workstation; now annotated tasks and project backups can also be stored securely in an S3 bucket. When an export is triggered, a popup window prompts the user to choose the target destination. The “Local Export” tab is selected by default, so clicking the export button downloads the target files to the local workstation. To use cloud storage instead, select the “S3 Export” tab, enter your Amazon S3 credentials, and export tasks and projects directly to the specified S3 bucket path. NLP Lab can store the S3 credentials for future use.

Improved HIPAA compliance with disabled exports to local storage

NLP Lab 5.2 also adds the option to restrict exports for greater control over tasks and projects. When dealing with sensitive data, admin users can disable the export of tasks and projects to the local workstation. This encourages users to adopt the more versatile and secure option of exporting data to Amazon S3.

Disable Local Export: System administrators can now manage export settings from the system settings page. Enabling the “Disable Local Export” option turns off export to local workstations for all projects.

Selective Export Exceptions: Administrators can still allow local export for specific projects if needed. To do this, click the “Add Project” button in the Exceptions widget and search for the projects to add to the exceptions list.

S3 Bucket Export: With the “Disable Local Export” option activated, users can export tasks and projects only to Amazon S3 bucket paths (except for projects on the exceptions list), so sensitive data is stored securely in the cloud rather than on individual workstations.

By introducing these export enhancements, NLP Lab 5.2.0 empowers organizations to streamline their data management processes while maintaining flexibility and control over export options. Users can continue to export specific projects to their local workstations if required, while others can benefit from the reliability and accessibility of exporting to Amazon S3 buckets.
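The export rules above reduce to a simple check. This is a minimal sketch, not NLP Lab code: `ExportSettings` and `allowed_export_targets` are hypothetical names, the project names are made up, and it assumes S3 export is always offered while local export depends on the “Disable Local Export” switch and the exceptions list.

```python
from dataclasses import dataclass, field


@dataclass
class ExportSettings:
    # Hypothetical model of the system-level export settings.
    disable_local_export: bool = False
    exception_projects: set[str] = field(default_factory=set)


def allowed_export_targets(settings: ExportSettings, project: str) -> list[str]:
    """Return the export destinations offered for one project."""
    targets = ["s3"]  # assumption: S3 export is always available
    # Local export stays available if the switch is off or the project is an exception.
    if not settings.disable_local_export or project in settings.exception_projects:
        targets.insert(0, "local")
    return targets


settings = ExportSettings(disable_local_export=True, exception_projects={"demo-project"})
print(allowed_export_targets(settings, "clinical-notes"))  # ['s3']
print(allowed_export_targets(settings, "demo-project"))    # ['local', 's3']
```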

Section-based annotation improvements

Support for task splitting with external services

We are excited to introduce a new feature in NLP Lab that allows users to import sections created outside of the platform. Users can now import tasks already split into sections by external tools such as OpenAI’s ChatGPT. To support this, we added “Additional sections”: sections that have no definition NLP Lab could use to identify them automatically. Such sections can only be created manually by annotators or imported via the JSON import format. If the imported JSON file includes a section definition, users must check the “Preserve Imported Sections” option on the import screen.

Targeted pre-annotation for relevant sections

Previous versions already filtered the labels/choices available on the Annotation screen according to their association with the active sections. This version extends the same filtering to pre-annotations, based on the section-specific configuration.

Users can configure labels to be displayed exclusively in specific sections during both manual annotation and automatic pre-annotation. For instance, consider an NER project whose taxonomy has two labels: Label1, set to be shown only in Section1, and Label2, set to be shown only in Section2.

When running pre-annotation, NLP Lab automatically adheres to these associations: in Section1, users only see pre-annotations for Label1, and in Section2, only instances of Label2 are shown.
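The Label1/Label2 example can be expressed as a filter over pre-annotation results. This sketch assumes a hypothetical `Prediction` record and a label-to-sections mapping; it does not reflect NLP Lab's actual configuration format or internals. It also assumes that labels with no section restriction are kept in every section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    label: str
    section: str
    start: int
    end: int


# Hypothetical configuration: label -> sections where it may appear.
LABEL_SECTIONS = {
    "Label1": {"Section1"},
    "Label2": {"Section2"},
}


def filter_by_section(preds, label_sections):
    """Keep predictions whose label is allowed in their section.

    Labels missing from the mapping are treated as unrestricted (an assumption).
    """
    return [
        p for p in preds
        if p.label not in label_sections or p.section in label_sections[p.label]
    ]


preds = [
    Prediction("Label1", "Section1", 0, 5),
    Prediction("Label2", "Section1", 10, 15),
    Prediction("Label1", "Section2", 40, 44),
    Prediction("Label2", "Section2", 50, 58),
]
kept = filter_by_section(preds, LABEL_SECTIONS)
print([(p.label, p.section) for p in kept])
# [('Label1', 'Section1'), ('Label2', 'Section2')]
```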

Pre-annotations applied to all sections defined for a task

NLP Lab 5.2 adds a new feature, “Preannotations for Union of Sections”. This enhancement ensures that pre-annotations cover all relevant sections, whether imported from outside sources, manually added by annotators, or automatically detected by the tool.

Because the sections chosen by every annotator are taken into account during pre-annotation, collaboration improves and no annotator's view of the task is lost, resulting in a more precise and efficient annotation process.

Imagine a task, Task-1, on which two annotators, Annotator-1 and Annotator-2, are working. Annotator-1 customizes the sections: they manually delete all relevant sections generated by the section rules and add a new relevant section instead. Annotator-2, on the other hand, keeps the automatically detected sections and also manually creates a new relevant section, different from the one Annotator-1 added. When the project manager runs pre-annotation on Task-1, the process considers the union of the sections added by both annotators, along with the relevant sections generated by the section rules or imported from external sources.

To further optimize the annotation experience, NLP Lab provides a checkbox “Filter pre-annotations according to my latest completion” within the Predictions card on the right-hand side of the labeling screen. Enabling this option ensures that the pre-annotation process only includes sections present in the latest completion of the current user.
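The Task-1 scenario can be sketched as a set union, with the “Filter pre-annotations according to my latest completion” checkbox narrowing it to the current user's most recent completion. `Completion`, `sections_to_preannotate`, and the section names are hypothetical; the fallback used when the current user has no completion is an assumption, not documented behavior.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Completion:
    user: str
    created_at: int            # hypothetical ordering key, e.g. a timestamp
    sections: frozenset[str]   # relevant sections kept in this completion


def sections_to_preannotate(completions, detected_sections, current_user,
                            filter_by_latest=False):
    """Return the sections that pre-annotation should cover for one task."""
    if filter_by_latest:
        mine = [c for c in completions if c.user == current_user]
        if mine:
            # Only the sections in the current user's most recent completion.
            return set(max(mine, key=lambda c: c.created_at).sections)
        # Assumption: with no completion of their own, fall back to the union.
    # Union of rule-detected/imported sections and every annotator's sections.
    union = set(detected_sections)
    for c in completions:
        union |= c.sections
    return union


detected = {"Rule-A", "Rule-B"}  # from section rules or an import
completions = [
    Completion("Annotator-1", 1, frozenset({"Manual-1"})),  # deleted rule sections
    Completion("Annotator-2", 2, frozenset({"Rule-A", "Rule-B", "Manual-2"})),
]
print(sorted(sections_to_preannotate(completions, detected, "manager")))
# ['Manual-1', 'Manual-2', 'Rule-A', 'Rule-B']
print(sorted(sections_to_preannotate(completions, detected, "Annotator-1",
                                     filter_by_latest=True)))
# ['Manual-1']
```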

Improvements

Re-split tasks on Update of Section Definitions

Previously, tasks that had already been imported into a Section-Based Annotation project could not be re-split. Users can now re-split older tasks using new or updated section rules, so that any new completions reflect the new rules (if the user so chooses).

Disable auto-draft on the Annotation page

Version 5.2 adds an option to the labeling page settings that disables auto-draft. When this option is enabled, drafts are no longer saved automatically in the client cache. Consequently, annotations are lost if the page is changed or reloaded without clicking save or update. To preserve their progress, users must manually save or update the task before changing or reloading the page.

Note: By opting to disable auto-draft, all previously saved auto-drafts for every completion across projects will be permanently deleted.

Error message when project description exceeds the character limit

When adding or updating a project description, an error message is now shown if the description exceeds 300 characters, instead of the operation failing silently.

New Banner for Status of Section Splitting Classifier

To better indicate the deployment status of the section-splitting classifier server, a banner has been added to the task Import page. From the banner, users can redeploy the server or cancel its deployment if needed.

The banner color indicates the classifier status:

- Blue: the classifier is being deployed
- Orange: the classifier was deployed and then deleted
- Green: the classifier was deployed successfully
- Red: the classifier deployment failed

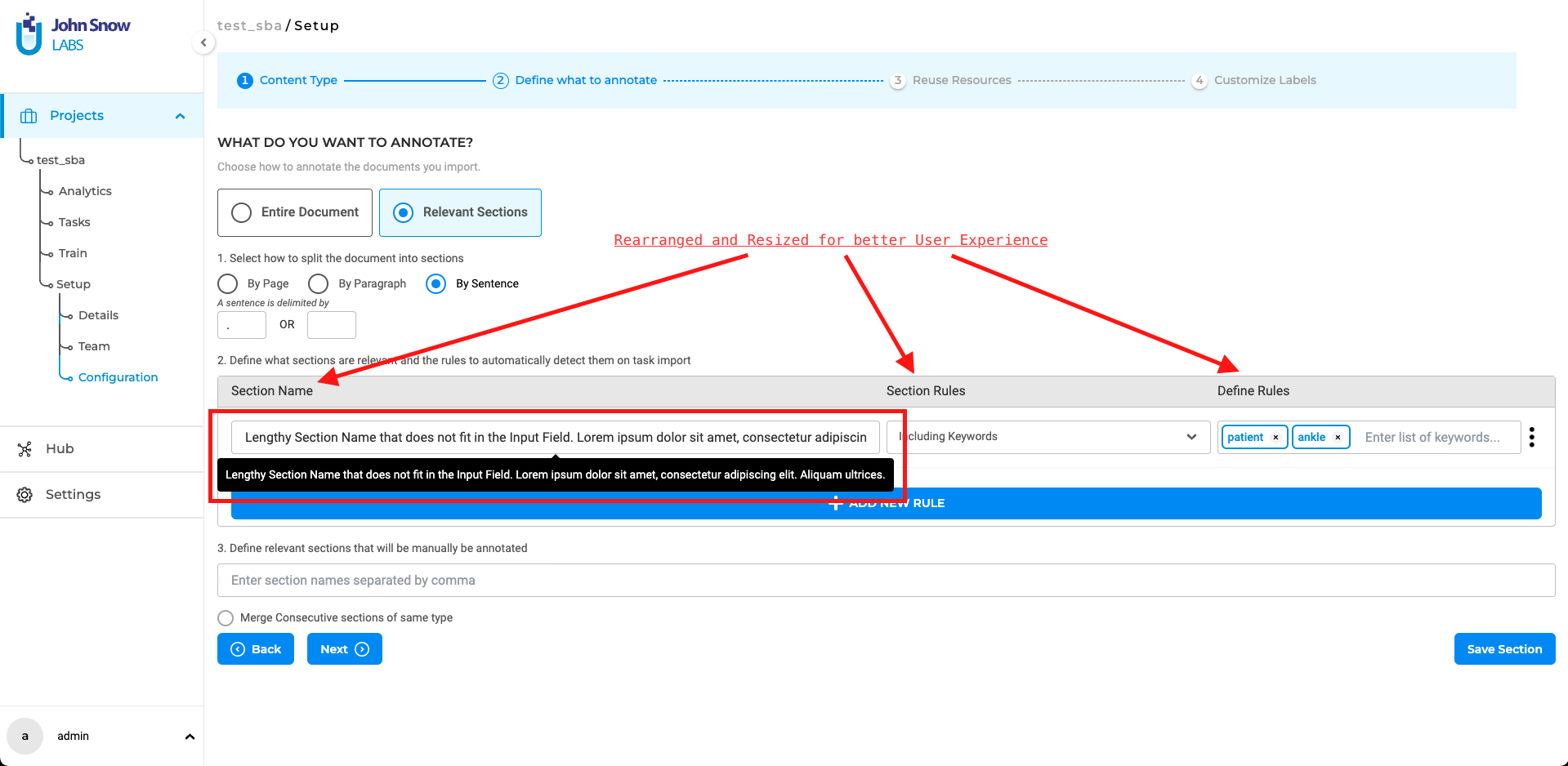

Reordering, resizing, and tooltips for Section-Based Annotation configurations

The input fields for Section-Based configurations have been reorganized and resized to improve the rule-creation experience. Tooltips have also been added for long section names, so users can read them in full without scrolling through the input box. These improvements aim to streamline the configuration process.

Manual Section Creation

During manual section creation, the selection now persists in the section creation modal until the section is created or the process is canceled, so it is not lost partway through the procedure.

Bug Fixes

- Users with the “Admin” role did not have permission to make changes to the license and infrastructure page

Users with the admin role were unable to modify the license and infrastructure page. This issue has been resolved, and users in the “Admin” group now have full permissions for backup, models hub, infrastructure, analytics, cluster, playground, and license.

- S3 folder import did not accept path URLs ending with “/”

Users can now import tasks from an S3 bucket using paths with or without a trailing “/”.

- Project search did not work on the second page of the Projects list page

The project search feature did not work correctly on the second page of the Projects list page. This problem has been fixed, and users can now search for projects from any page.

- Show downloading animation on the “Models Hub” page

A downloading animation has been added to the “Models Hub” page, allowing users to track the progress and completion status when downloading any model.

- In SBA-enabled projects where sections are “split by sentence”, relations were generated only for the first relevant section

This issue has been resolved, and relations are now created for entities/labels in all active relevant sections during pre-annotation.

- The “Next” and “Previous” buttons did not work on pages with no relevant sections

This issue has been fixed; the “Next” and “Previous” buttons now work on such pages, making it easier to navigate between sections.

- RE prompts using NER healthcare prompts could not be added to the project configuration

The issue preventing the addition of RE prompts using NER healthcare prompts to the project configuration has been fixed.

- Unable to access the Customize Labels page when an error occurs in the project configuration

When an error occurred in the project configuration while adding models, rules, or prompts, both the Re-use Resources and Customize Labels tabs became inaccessible. This issue has been resolved.

- Remove warning message regarding pre-annotation of tasks exceeding 45k tokens

The warning message regarding the pre-annotation of tasks exceeding 45k tokens, specifically with floating licenses, has been removed. Users can now pre-annotate tasks containing 45k+ tokens regardless of the license used.
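The trailing-slash fix for S3 folder import comes down to normalizing the folder path before listing objects. This is a minimal sketch assuming paths of the form `s3://bucket/folder`; `split_s3_path` is a hypothetical helper, not NLP Lab code.

```python
from urllib.parse import urlparse


def split_s3_path(path: str) -> tuple[str, str]:
    """Split an S3 folder path into (bucket, prefix).

    's3://bucket/folder' and 's3://bucket/folder/' give the same result.
    """
    parsed = urlparse(path)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"not an S3 path: {path!r}")
    # Drop leading/trailing slashes, then add exactly one trailing slash
    # so the prefix matches only keys inside that folder.
    folder = parsed.path.strip("/")
    return parsed.netloc, (folder + "/" if folder else "")


print(split_s3_path("s3://my-bucket/tasks/batch1"))   # ('my-bucket', 'tasks/batch1/')
print(split_s3_path("s3://my-bucket/tasks/batch1/"))  # ('my-bucket', 'tasks/batch1/')
```

The returned prefix could then be passed as the `Prefix` argument of an S3 listing call such as boto3's `list_objects_v2`; keeping exactly one trailing “/” stops `s3://my-bucket/tasks` from also matching keys such as `tasks-archive/...`.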
