The Playground feature of the Generative AI Lab allows users to deploy and test models, rules, and/or prompts without going through the project setup wizard. This simplifies the initial exploration of resources and facilitates experiments on custom data. Any model, rule, or prompt can be selected and deployed for testing by clicking the “Open in Playground” button.

Experiment with Rules

Rules can be deployed to the Playground from the rules page. When a rule is deployed in the Playground, the user can also change its parameters via the rule definition form on the right side of the page. After saving the changes, users need to click the “Deploy” button to refresh the pre-annotation results for the provided text.

Experiment with Prompts

Generative AI Lab’s Playground also supports the deployment and testing of prompts. Users can quickly test the results of applying a prompt to custom text, edit the prompt, save it, and deploy it right away to see the change in the pre-annotation results.

Experiment with Models

Any Classification, NER, or Assertion Status model available in Generative AI Lab can also be deployed to the Playground for testing on custom text.

Deployment of models and rules is supported by floating and air-gapped licenses. Healthcare, Legal, and Finance models require a license with their respective scopes to be deployed in Playground. Unlike pre-annotation servers, only one playground can be deployed at any given time.

Direct Navigation to Active Playground Sessions

Users often need to move between projects and Playground experiments, especially to revisit a previously edited prompt or rule. For this reason, Generative AI Lab allows users to navigate to any active Playground session without having to redeploy the server. Users can check how their resources (models, rules, and prompts) behave at project level, compare the pre-annotation results with ground truth, and quickly return to their experiments to modify prompts or rules without losing progress or spending time on new deployments. This makes experimenting with prompts and rules in the Playground more efficient.

Automatic Deployment of Updated Rules/Prompts

Another benefit of experimenting with prompts and rules in the Playground is immediate feedback. When you change the parameters of a rule or the questions in a prompt, the updates are deployed automatically once they are saved. Manually deploying the server is no longer necessary for changes to rules or prompts to be reflected in the pre-annotation results: after saving the changes, click the Test button to see the updated results. This lets you try a range of variations and see how each one affects the correctness and completeness of the results.

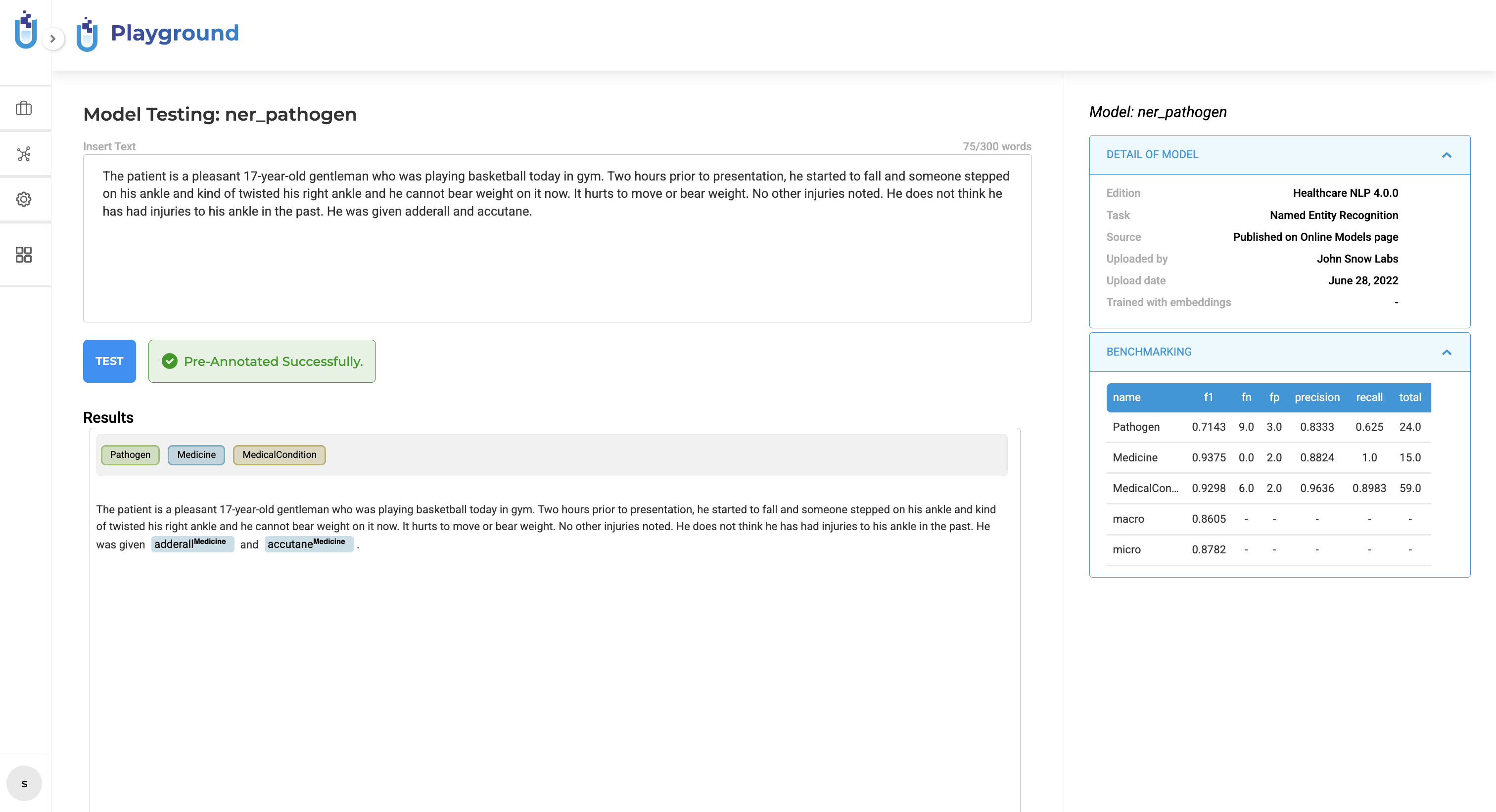

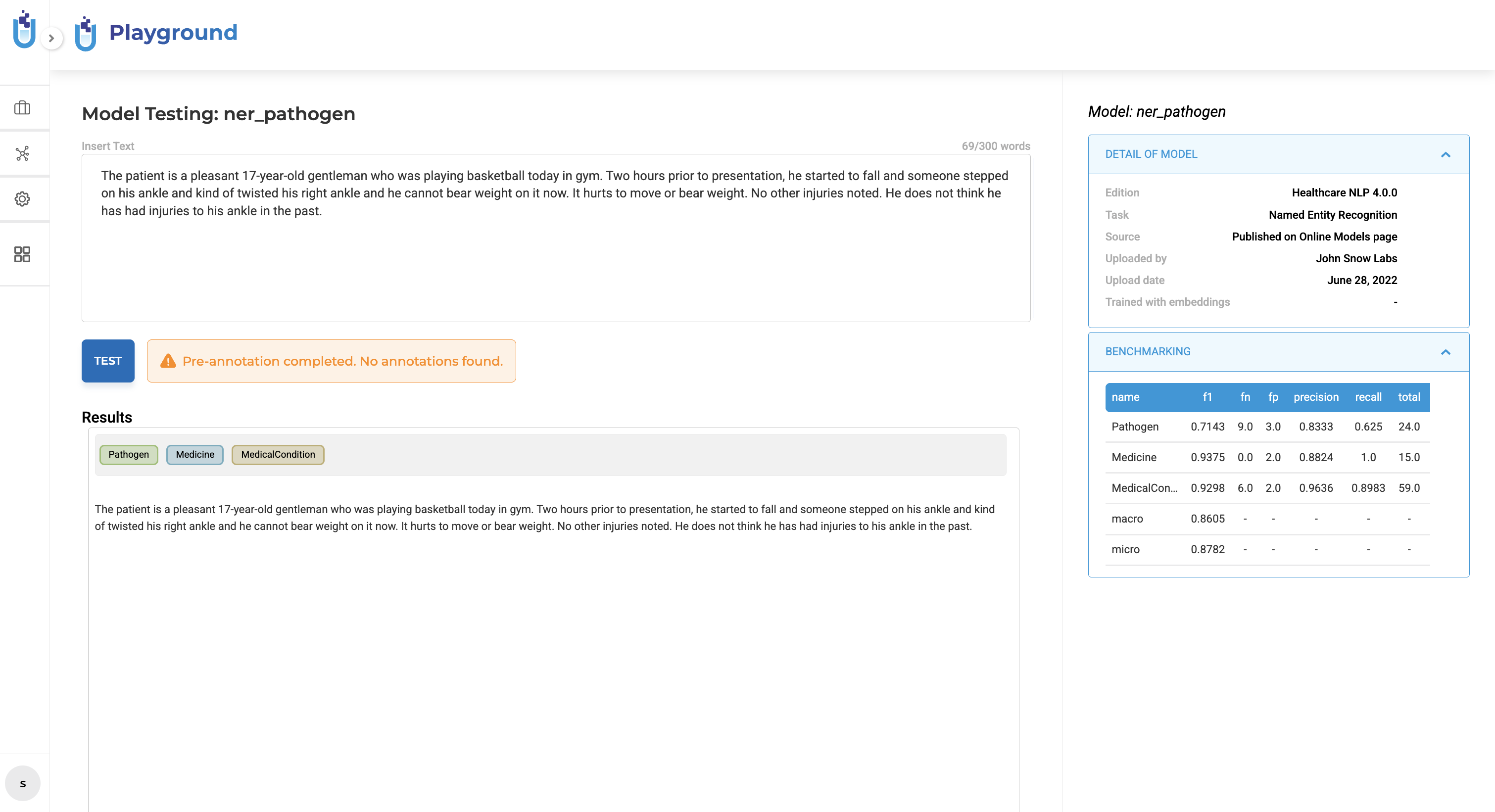

Clear Outcome Notifications for Playground Tests

To improve transparency and usability during experimentation, the Playground provides explicit outcome notifications when a pre-annotation test completes. After running a test, the system evaluates the execution result and displays a contextual status message using standardized visual indicators:

A green success notification appears when pre-annotation completes and produces results. A gray informational notification appears when pre-annotation completes successfully but no results are generated. A red failure notification appears when the pre-annotation process fails.

These notifications are displayed immediately upon completion and do not modify the existing pre-annotation workflow or configuration.
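The three outcomes described above can be summarized in a short sketch. This is only an illustration of the decision logic, not the product's implementation: the `Notification` enum, the `notification_for` function, and its `failed` and `result_count` parameters are hypothetical names introduced here.

```python
from enum import Enum


class Notification(Enum):
    """Hypothetical notification types, keyed to the colors described in the text."""
    SUCCESS = "green"   # pre-annotation completed and produced results
    INFO = "gray"       # pre-annotation completed but produced no results
    FAILURE = "red"     # pre-annotation failed


def notification_for(failed: bool, result_count: int) -> Notification:
    """Pick the notification for a finished Playground test (illustrative only)."""
    if failed:
        return Notification.FAILURE
    if result_count == 0:
        return Notification.INFO
    return Notification.SUCCESS
```

For example, under these assumptions a test that finishes without errors but finds nothing to annotate maps to `Notification.INFO`, the gray informational message.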

Playground Server Destroyed after 5 Minutes of Inactivity

When active, the NLP playground consumes resources from your server. For this reason, Generative AI Lab defines an idle time limit of 5 minutes after which the playground is automatically destroyed. This is done to ensure that the server resources are not being wasted on idle sessions. When the server is destroyed, a message is displayed, so users are aware that the session has ended. Users can view information regarding the reason for the Playground’s termination, and have the option to restart by pressing the Restart button.
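The idle-timeout behavior can be pictured with a minimal sketch. It assumes that "activity" means any user interaction that resets a timer, which the text does not specify; the `PlaygroundSession` class and its methods are hypothetical and do not correspond to any Generative AI Lab API. Only the 5-minute limit comes from the description above.

```python
import time
from typing import Callable

IDLE_LIMIT_SECONDS = 5 * 60  # the 5-minute idle limit stated in the text


class PlaygroundSession:
    """Hypothetical model of a Playground session with an idle timeout."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        # The clock is injectable so the behavior can be tested without waiting.
        self._clock = clock
        self._last_activity = clock()
        self.active = True

    def touch(self) -> None:
        """Record user activity (assumed here to reset the idle timer)."""
        if self.active:
            self._last_activity = self._clock()

    def check_idle(self) -> bool:
        """Destroy the session once the idle limit is reached; return whether it is still active."""
        if self.active and self._clock() - self._last_activity >= IDLE_LIMIT_SECONDS:
            self.active = False
        return self.active

    def restart(self) -> None:
        """Bring the session back, as the Restart button does in the UI."""
        self._last_activity = self._clock()
        self.active = True
```

Using a monotonic clock in this sketch avoids spurious timeouts if the system clock is adjusted while a session is open.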

Playground Servers use Light Pipelines

Replacing regular pre-annotation pipelines with Light Pipelines significantly improves the performance of the Playground. Light Pipelines allow for faster initial deployment, quicker pipeline updates, and faster processing of text data, resulting in quicker results in the UI.

Direct Access to Model Details Page on the Playground

Generative AI Lab Playground also provides quick access to information about the models being used. This information can be valuable for users who want a deeper understanding of a model’s inner workings and capabilities. By clicking on the model’s name, users can navigate to the NLP Models hub page, which provides additional details about the model, including its training data, architecture, and performance metrics. With this information, users can better understand the model’s strengths and weaknesses and make more informed decisions about how well the model suits the data they need to annotate.