To install the johnsnowlabs Python library and all of John Snow Labs open source libraries, just run

pip install johnsnowlabs

This installs Spark NLP, NLU, Spark NLP Display, PySpark, and other open source sub-dependencies.

To quickly test the installation, you can run in your Shell:

python -c "from johnsnowlabs import *;print(nlp.load('emotion').predict('Wow that easy!'))"

or in Python:

from johnsnowlabs import *

nlp.load('emotion').predict('Wow that easy!')

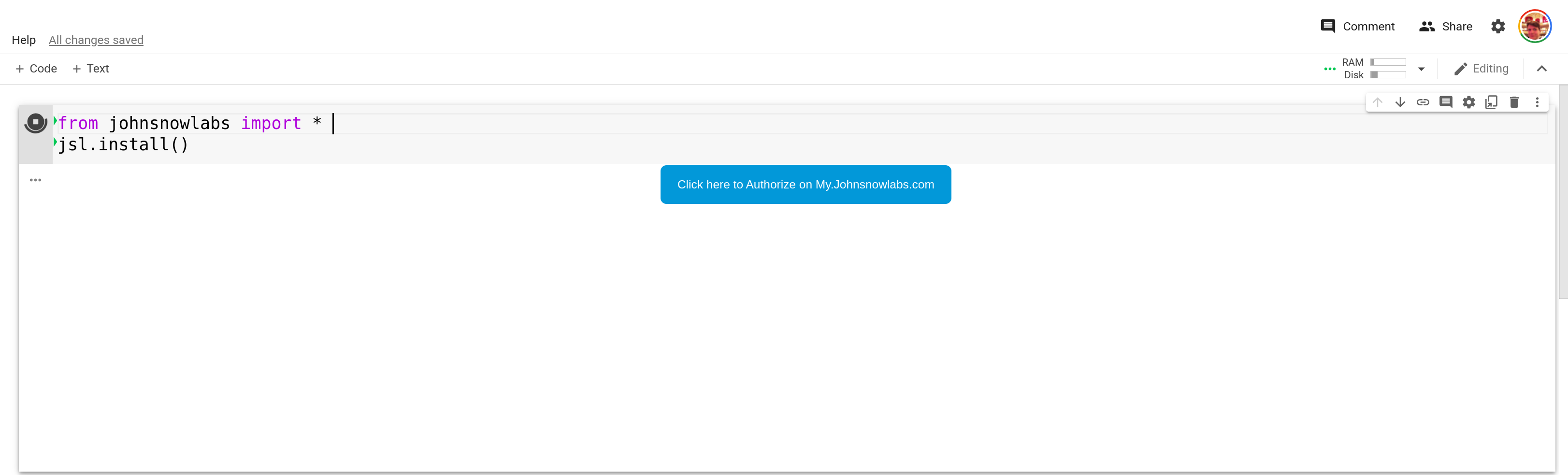

The quickest way to get access to licensed libraries like Finance NLP, Legal NLP, Healthcare NLP or Visual NLP is to run the following in Python:

from johnsnowlabs import *

nlp.install()

This opens a browser pop-up window or, in notebook environments such as Google Colab, shows a clickable button that opens the pop-up.

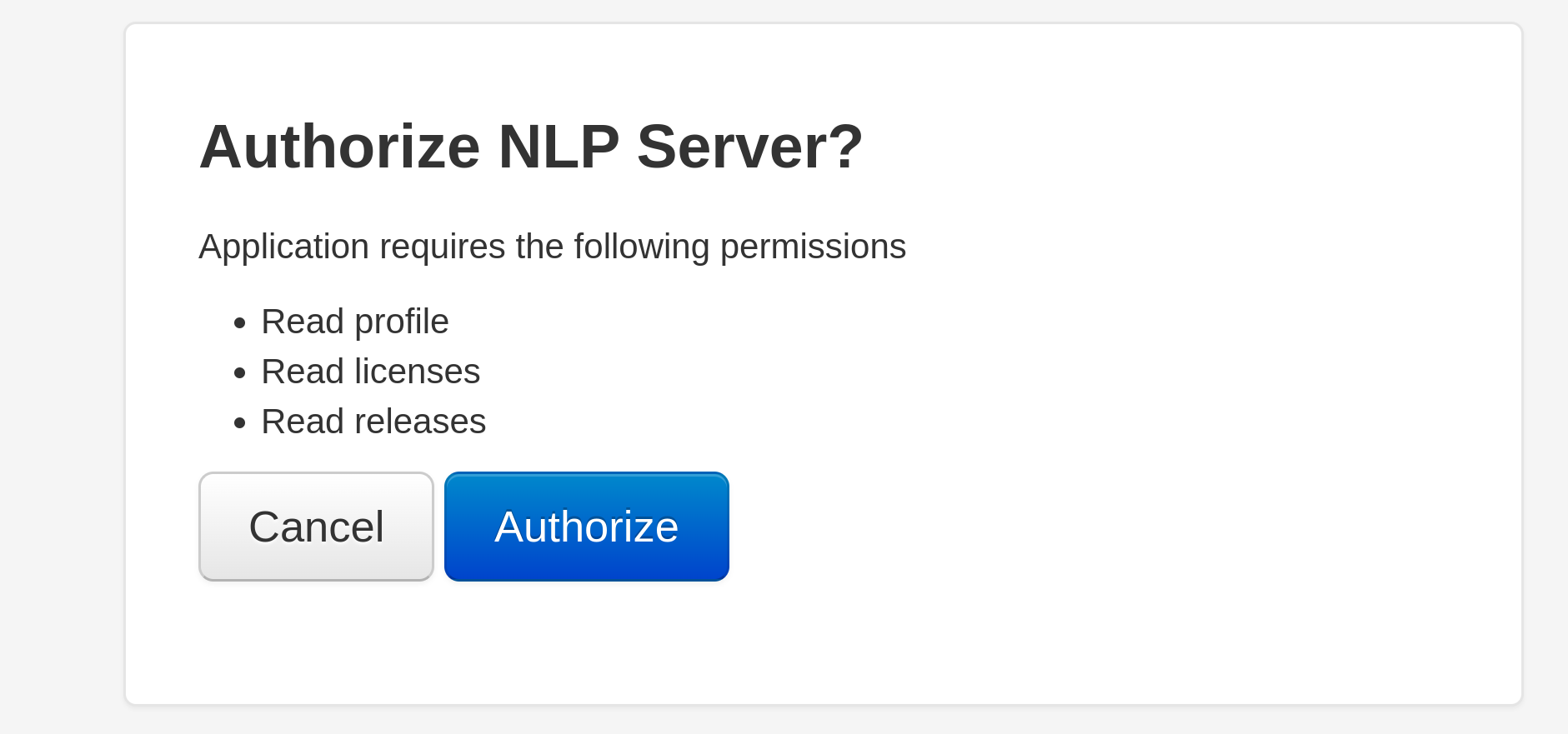

Click on the Authorize button to allow the library to connect to your account on my.JohnSnowLabs.com and access your licenses.

This will enable the installation and use of all licensed products for which you have a valid license.

Make sure to Restart your Notebook after installation.

Colab Button

Where the Pop-Up leads you to:

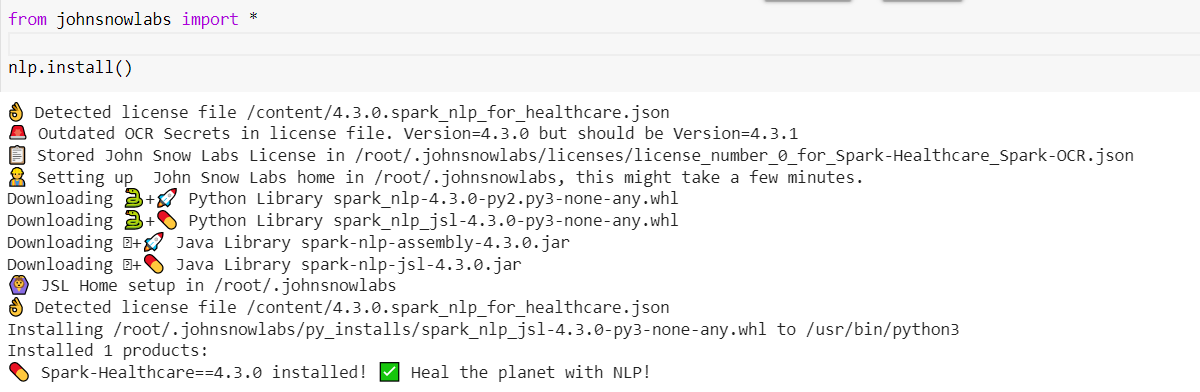

After clicking Authorize:

Additional Requirements

- Make sure you have Java 8 installed; for setup instructions see How to install Java 8 for Windows/Linux/Mac?

- Windows users must additionally follow every step precisely as defined in How to correctly install Spark NLP for Windows?
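Since Java 8 is a prerequisite, a quick pre-flight check can catch a missing Java before the first start. The following is a minimal sketch using only the Python standard library; `find_java_version` is a hypothetical helper name and is not part of the johnsnowlabs library.

```python
import shutil
import subprocess
from typing import Optional


def find_java_version() -> Optional[str]:
    """Return the first line of `java -version`, or None if java is not on PATH."""
    java = shutil.which("java")
    if java is None:
        return None
    # `java -version` writes its banner to stderr, not stdout.
    result = subprocess.run([java, "-version"], capture_output=True, text=True)
    banner = (result.stderr or result.stdout).strip()
    return banner.splitlines()[0] if banner else None


if __name__ == "__main__":
    version = find_java_version()
    print(version or "No java executable found on PATH")
```

The check only reports what is on PATH; whether the reported version is Java 8 still has to be read from the banner.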

Install Licensed Libraries

The following is a more detailed overview of the alternative installation methods and parameters you can use.

The parameters of nlp.install() fall into 3 categories:

- Authorization Flow Choice & Auth Flow Tweaks

- Installation Targets such as Airgap Offline, Databricks, new Python Venv, Currently running Python Environment, or target Python Environment

- Installation process tweaks

List all of your accessible Licenses

You can use nlp.list_remote_licenses() to list all available licenses in your my.johnsnowlabs.com/ account

and nlp.list_local_licenses() to list all locally cached licenses.

Authorization Flows overview

The johnsnowlabs library gives you multiple methods to authorize and provide your license when installing licensed libraries.

Once access to your license is provided, it is cached locally in ~/.johnsnowlabs/licenses and re-used when calling nlp.start() and nlp.install(), so you don’t need to authorize again.

Only 1 license can be provided and cached per authorization flow.

If you have multiple licenses, you can re-run an authorization method and use the local_license_number and remote_license_number parameters to choose between the licenses you have access to.

Licenses are numbered locally in the order they were provided; for more info see License Caching.

| Auth Flow Method | Description | Python nlp.install() usage |

|---|---|---|

| Browser Based Login (OAuth) Localhost | Browser window will pop up, where you can give access to your license. Use remote_license_number parameter to choose between licenses. Use remote_license_number parameter to choose between licenses |

nlp.install() |

| Browser Based Login (OAuth) on Google Colab | A button is displayed in your notebook, click it and visit new page to give access to your license. Use remote_license_number parameter to choose between licenses |

nlp.install() |

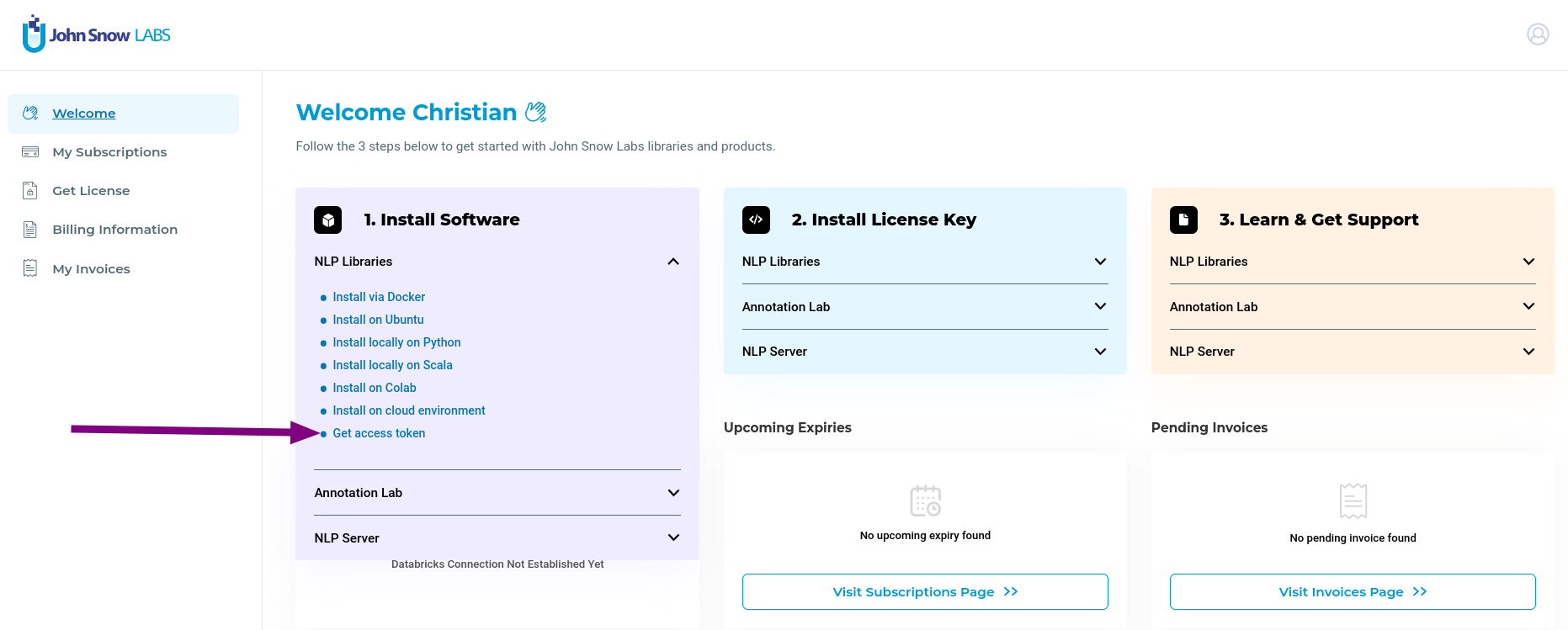

| Access Token | Vist my.johnsnowlabs.com to extract a token which you can provide to enable license access. See Access Token Example for more details | nlp.install(access_token=my_token) |

| License JSON file path | Define JSON license file with keys defined by License Variable Overview and provide file path | nlp.install(json_license_path=path) |

Auto-Detect License JSON file from os.getcwd() |

os.getcwd() directory is scanned for a .json file containing license keys defined by License Variable Overview |

nlp.install() |

| Auto-Detect OS Environment Variables | Environment Variables are scanned for license variables defined by License Variable Overview | nlp.install() |

Auto-Detect Cached License in ~/.johnsnowlabs/licenses |

If you already have provided a license previously, it is cached in ~/.johnsnowlabs/licenses and automatically loaded.Use local_license_number parameter to choose between licenses if you have multiple |

nlp.install() |

| Manually specify license data | Set each license value as python parameter, defined by License Variable Overview | nlp.install(hc_license=hc_license enterprise_nlp_secret=enterprise_nlp_secret ocr_secret=ocr_secret ocr_license=ocr_license aws_access_key=aws_access_key aws_key_id=aws_key_id) |

Optional Auth Flow Parameters

Use these parameters to configure the preferred authorization flow.

| Parameter | Description |
|---|---|
| browser_login | Enable or disable browser based login and pop up if no license is provided or automatically detected. Defaults to True. |
| force_browser_login | If a cached license is found, no browser pop up occurs. Set to True to force the browser pop up, so that you can download a different license if you have several. |
| local_license_number | Specify the license number when loading a cached license from JSL home and multiple licenses have been cached. Defaults to 0, which uses the very first license ever provided to the johnsnowlabs library. |
| remote_license_number | Specify the license number to use with OAuth based approaches. Defaults to 0, which uses your first license from my.johnsnowlabs.com. |
| store_in_jsl_home | By default, license data and Jars/Wheels are stored in the JSL home directory. This enables nlp.start() and nlp.install() to re-use your information, so you don’t have to specify it on every run. Set to False to disable this caching behaviour. |
| only_refresh_credentials | Set to True if you don’t want to install anything and just need to refresh or index a new license. Defaults to False. |
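As an illustration of how these parameters might be combined, the sketch below forces a fresh browser login, picks the second license of the account, and only refreshes credentials. The parameter names come from the table above; that they combine exactly this way is an assumption, and `refresh_second_remote_license` is a hypothetical wrapper name.

```python
def refresh_second_remote_license() -> None:
    """Force a new browser login and cache the second remote license without installing."""
    # Imported lazily so this snippet can be defined without johnsnowlabs present.
    from johnsnowlabs import nlp

    nlp.install(
        force_browser_login=True,       # ask for the browser flow even if a license is cached
        remote_license_number=1,        # numbering starts at 0, so 1 is the second license
        only_refresh_credentials=True,  # do not install anything, only index the license
    )
```

Calling the function requires the johnsnowlabs library and an account on my.johnsnowlabs.com.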

Optional Installation Target Parameters

Use these parameters to configure where to install the libraries.

| Parameter | Description |
|---|---|
| python_exec_path | Specify the path to a Python executable into whose environment the libraries will be installed. Defaults to the currently executing Python process, i.e. sys.executable, and its pip module is used for setup. |
| venv_creation_path | Specify the path to a folder in which a fresh venv will be created with all libraries. Using this parameter ignores the python_exec_path parameter, since the newly created venv’s Python executable is used for setup. |
| offline_zip_dir | Specify the path to a folder in which 3 sub-folders are created, py_installs, java_installs, and licenses, holding the corresponding Wheels/Jars/Tars and licenses. The folder is additionally zipped. |
| Install to Databricks with access Token | See Databricks Documentation for extracting a token which you can provide for Databricks access; see Databricks Install Section for more details. |

Optional Installation Process Parameters

Use the following parameters to configure what should be installed.

| Parameter | Description |
|---|---|
| install_optional | By default installs all open source libraries if missing. Set to False to disable. |
| install_licensed | By default installs all licensed libraries you have access to if they are missing. Set to False to disable. |
| include_dependencies | Defaults to True, which installs all dependencies. If set to False, pip will be executed with the --no-deps argument under the hood. |
| product | Specify the product to install. By default installs everything you have access to. |
| only_download_jars | By default all libraries are installed to the current environment via pip. Set to True to disable installing Python dependencies and only download jars to the John Snow Labs home directory. |
| hardware_target | Specify the hardware install type, either cpu, gpu, apple_silicon, or aarch. Defaults to cpu. If you have a GPU and want to leverage CUDA, set gpu. If you are on Apple Silicon (M1) or AArch64, choose the corresponding type. |
| py_install_type | Specify the Python installation type to use, either tar.gz or whl. Defaults to whl. |
| refresh_install | Delete any cached files before installing by removing the John Snow Labs home folder. This will delete your locally cached licenses. |
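Two of these parameters accept only a fixed set of values, so it can help to validate them before starting a long installation. The following is a minimal sketch under that assumption; `build_install_kwargs` is a hypothetical helper, and the allowed values are copied from the table above.

```python
from typing import Any, Dict

# Allowed values as listed in the table above.
HARDWARE_TARGETS = {"cpu", "gpu", "apple_silicon", "aarch"}
PY_INSTALL_TYPES = {"whl", "tar.gz"}


def build_install_kwargs(hardware_target: str = "cpu",
                         py_install_type: str = "whl",
                         **extra: Any) -> Dict[str, Any]:
    """Validate the two enumerated options and return kwargs for nlp.install()."""
    if hardware_target not in HARDWARE_TARGETS:
        raise ValueError(f"hardware_target must be one of {sorted(HARDWARE_TARGETS)}")
    if py_install_type not in PY_INSTALL_TYPES:
        raise ValueError(f"py_install_type must be one of {sorted(PY_INSTALL_TYPES)}")
    return {"hardware_target": hardware_target, "py_install_type": py_install_type, **extra}


kwargs = build_install_kwargs("gpu", install_licensed=False)
print(kwargs)
# With johnsnowlabs installed: nlp.install(**kwargs)
```

Keeping the validation separate from the install call means a typo such as an unsupported hardware target fails immediately rather than partway through a download.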

Automatic Databricks Installation

Use any of the Databricks auth flows to enable the johnsnowlabs library to automatically install all open source and licensed features into a Databricks cluster.

You must additionally use one of the John Snow Labs License Authorization Flows to give access to your John Snow Labs license, which will be installed to your Databricks cluster.

A John Snow Labs Home directory is constructed in the distributed Databricks File System at /dbfs/johnsnowlabs, which has all Jars, Wheels and License Information to run all features in a Databricks cluster.

| Databricks Auth Flow Method | Description | Python nlp.install() usage |
|---|---|---|
| Access Token | See Databricks Documentation for extracting a token which you can provide for Databricks access; see Databricks Install Section for details. | nlp.install(databricks_cluster_id=my_cluster_id, databricks_host=my_databricks_host, databricks_token=my_access_databricks_token) |

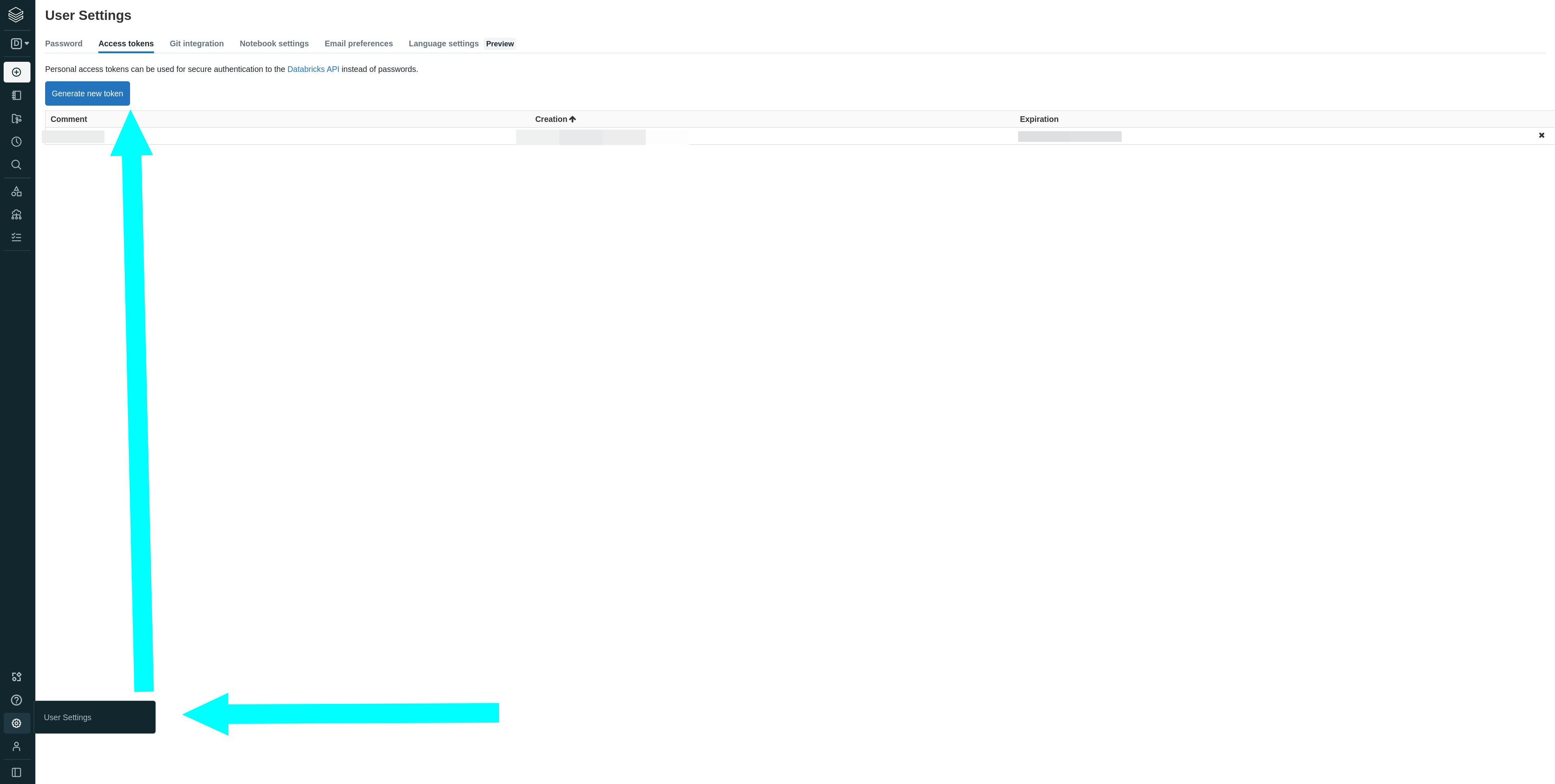

Where to find your Databricks Access Token:

Databricks Cluster Creation Parameters

You can set the following parameters on the nlp.install() function to define properties of the cluster which will be created.

See Databricks Cluster Creation for a detailed description of all parameters.

You can use the extra_pip_installs parameter to install a list of additional PyPI libraries to the cluster.

Just set nlp.install_to_databricks(extra_pip_installs=['langchain','farm-haystack==1.2.3']) to install the libraries.

| Cluster creation Parameter | Default Value |

|---|---|

| extra_pip_installs | None |

| block_till_cluster_ready | True |

| num_workers | 1 |

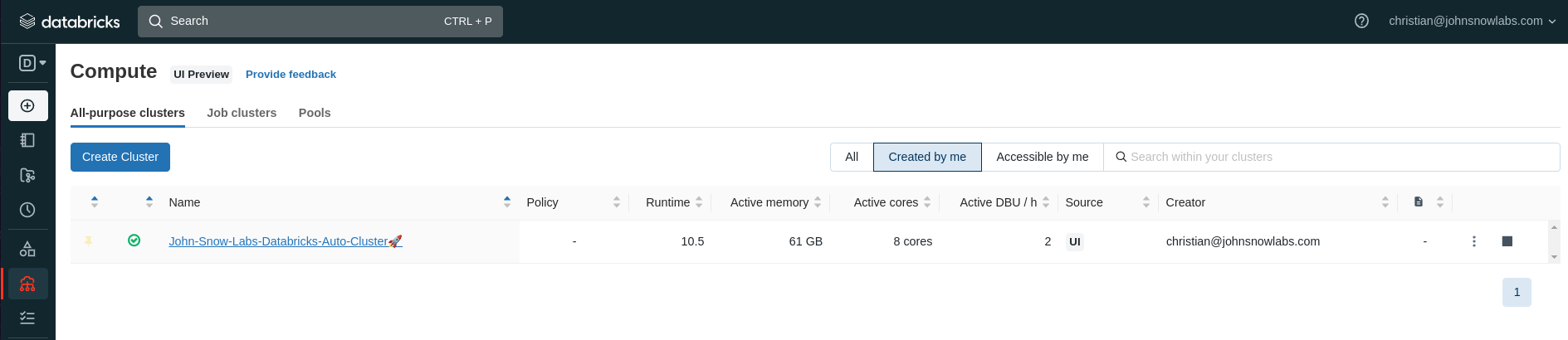

| cluster_name | John-Snow-Labs-Databricks-Auto-Cluster🚀 |

| node_type_id | i3.xlarge |

| driver_node_type_id | i3.xlarge |

| spark_env_vars | None |

| autotermination_minutes | 60 |

| spark_version | 10.5.x-scala2.12 |

| spark_conf | None |

| auto_scale | None |

| aws_attributes | None |

| ssh_public_keys | None |

| custom_tags | None |

| cluster_log_conf | None |

| enable_elastic_disk | None |

| cluster_source | None |

| instance_pool_id | None |

| headers | None |
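To keep cluster settings explicit, overrides can be merged into the documented defaults before they are passed on. The following is a minimal sketch, not the library's implementation: `CLUSTER_DEFAULTS` holds only a subset of the table above, and `cluster_params` is a hypothetical helper.

```python
from typing import Any, Dict

# A few defaults copied from the table above; parameters omitted here default to None.
CLUSTER_DEFAULTS: Dict[str, Any] = {
    "block_till_cluster_ready": True,
    "num_workers": 1,
    "node_type_id": "i3.xlarge",
    "driver_node_type_id": "i3.xlarge",
    "autotermination_minutes": 60,
    "spark_version": "10.5.x-scala2.12",
    "extra_pip_installs": None,
}


def cluster_params(**overrides: Any) -> Dict[str, Any]:
    """Merge overrides into the documented defaults, rejecting unknown names."""
    unknown = set(overrides) - set(CLUSTER_DEFAULTS)
    if unknown:
        raise KeyError(f"Not in this sketch's parameter subset: {sorted(unknown)}")
    return {**CLUSTER_DEFAULTS, **overrides}


params = cluster_params(num_workers=2, extra_pip_installs=["langchain"])
print(params["num_workers"], params["node_type_id"])
# With johnsnowlabs installed, assuming the host and token are also supplied:
# nlp.install_to_databricks(databricks_host=..., databricks_token=..., **params)
```

Rejecting unknown names guards against silently misspelled parameters, which would otherwise leave the default in effect.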

The created cluster

License Variables Names for JSON and OS variables

The following variable names are checked when using a JSON or environment variables based approach for installing licensed features or when using nlp.start().

You can find all of your license information on https://my.johnsnowlabs.com/subscriptions

- AWS_ACCESS_KEY_ID: Assigned to you by John Snow Labs. Must be defined.

- AWS_SECRET_ACCESS_KEY: Assigned to you by John Snow Labs. Must be defined.

- HC_SECRET: The secret for a version of the enterprise NLP engine library. Changes between releases. Can be omitted if you don’t have access to enterprise NLP.

- HC_LICENSE: Your license for the medical features. Can be omitted if you don’t have a medical license.

- OCR_SECRET: The secret for a version of the Visual NLP (Spark OCR) library. Changes between releases. Can be omitted if you don’t have a Visual NLP (Spark OCR) license.

- OCR_LICENSE: Your license for the Visual NLP (Spark OCR) features. Can be omitted if you don’t have a Visual NLP (Spark OCR) license.

- JSL_LEGAL_LICENSE: Your license for Legal NLP features.

- JSL_FINANCE_LICENSE: Your license for Finance NLP features.

NOTE: Instead of JSL_LEGAL_LICENSE, HC_LICENSE and JSL_FINANCE_LICENSE you may have 1 generic SPARK_NLP_LICENSE.
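A license JSON file is just a flat object with these variable names as keys. The sketch below writes such a file with placeholder values and checks the two keys marked "Must be defined"; `write_license_file` is a hypothetical helper, and the temporary file location is chosen only for illustration.

```python
import json
import tempfile
from pathlib import Path

# Key names taken from the list above; the values here are placeholders.
license_data = {
    "AWS_ACCESS_KEY_ID": "<your key id>",
    "AWS_SECRET_ACCESS_KEY": "<your secret access key>",
    "HC_SECRET": "<your enterprise NLP secret>",
    "HC_LICENSE": "<your medical license>",
}

REQUIRED_KEYS = {"AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY"}


def write_license_file(data: dict, path: Path) -> Path:
    """Write license data as JSON after checking the keys marked 'Must be defined'."""
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"Missing required keys: {sorted(missing)}")
    path.write_text(json.dumps(data, indent=2))
    return path


license_path = write_license_file(license_data, Path(tempfile.mkdtemp()) / "license.json")
print(license_path)
# With johnsnowlabs installed: nlp.install(json_license_path=str(license_path))
```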

Installation Examples

Via Auto Detection & Browser Login

All default search locations are searched; if any credentials are found, they will be used. If no credentials are auto-detected, a browser window will pop up, asking you to authorize access to https://my.johnsnowlabs.com/. In Google Colab, a clickable button will appear, which opens a window where you can authorize access to https://my.johnsnowlabs.com/.

nlp.install()

Via Access Token

Get your License Token from My John Snow Labs

nlp.install(access_token='secret')

Where you find the license

Via Json Secrets file

Path to a JSON containing secrets, see License Variable Names for more details.

nlp.install(json_license_path='my/secret.json')

Via Manually defining Secrets

Manually specify all secrets. Some of these can be omitted, see License Variable Names for more details.

nlp.install(
    hc_license='Your HC License',
    fin_license='Your FIN License',
    leg_license='Your LEG License',
    enterprise_nlp_secret='Your NLP Secret',
    ocr_secret='Your OCR Secret',
    ocr_license='Your OCR License',
    aws_access_key='Your Access Key',
    aws_key_id='Your Key ID',
)
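Since some secrets can be omitted, one way to avoid passing empty values is to collect only those that are actually set. The following is a minimal sketch: the pairing of parameter names with environment variable names in `PARAM_TO_ENV` is an assumption inferred from the names (in particular the two AWS entries), and `manual_license_kwargs` is a hypothetical helper.

```python
import os
from typing import Dict, Mapping

# Assumed pairing of nlp.install() parameter names with environment variable names.
PARAM_TO_ENV = {
    "hc_license": "HC_LICENSE",
    "fin_license": "JSL_FINANCE_LICENSE",
    "leg_license": "JSL_LEGAL_LICENSE",
    "enterprise_nlp_secret": "HC_SECRET",
    "ocr_secret": "OCR_SECRET",
    "ocr_license": "OCR_LICENSE",
    "aws_access_key": "AWS_SECRET_ACCESS_KEY",
    "aws_key_id": "AWS_ACCESS_KEY_ID",
}


def manual_license_kwargs(env: Mapping[str, str] = os.environ) -> Dict[str, str]:
    """Collect the secrets that are set, skipping any that are missing or empty."""
    return {param: env[var] for param, var in PARAM_TO_ENV.items() if env.get(var)}


kwargs = manual_license_kwargs({"HC_LICENSE": "abc", "HC_SECRET": "1.2.3-xyz", "OCR_LICENSE": ""})
print(kwargs)
# With johnsnowlabs installed: nlp.install(**kwargs)
```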

Into Current Python Process

Uses sys.executable by default, i.e. the Python that is currently running the program.

nlp.install()

Into Custom Python Env

Uses a specific Python executable that is not the currently running Python. The pip module of the provided executable is used to install the libraries.

nlp.install(python_exec_path='my/python.exe')

Into freshly created venv

Creates a new venv using the venv module of the currently executing Python.

nlp.install(venv_creation_path='path/to/where/my/new/venv/will/be')
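Once a venv exists, its interpreter can serve as the python_exec_path for later installs. The sketch below locates that interpreter using the conventional venv layout of the Python standard library; `venv_python` is a hypothetical helper, and that the library creates venvs with this layout is an assumption.

```python
import os
from pathlib import Path


def venv_python(venv_dir: str) -> Path:
    """Return the conventional interpreter path inside a venv directory."""
    root = Path(venv_dir)
    if os.name == "nt":
        return root / "Scripts" / "python.exe"  # Windows layout
    return root / "bin" / "python"              # POSIX layout


python_path = venv_python("path/to/where/my/new/venv/will/be")
print(python_path)
# With johnsnowlabs installed: nlp.install(python_exec_path=str(python_path))
```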

Into Airgap/Offline Installation (Automatic)

Create a zip with all Jars/Wheels/Licenses you need to run all libraries in an offline environment.

Step1:

nlp.install(offline_zip_dir='path/to/where/my/zip/will/be')

Step2:

Transfer the zip file securely to your offline environment and unzip it. One option is the unix scp command.

scp /to/where/my/zip/will/be/john_snow_labs.zip 123.145.231.001:443/remote/directory

Step3: Then, from the remote machine's shell, unzip with:

# Unzip all files to ~/johnsnowlabs

unzip remote/directory/john_snow_labs.zip -d ~/johnsnowlabs

Step4 (option1): Install the wheels via jsl:

# If you unzipped to ~/johnsnowlabs, just update this setting before running and nlp.install() handles the rest for you!

from johnsnowlabs import *

nlp.settings.jsl_root = '~/johnsnowlabs'

# Make sure you have Java 8 installed!

nlp.install()

Step4 (option2): Install the wheels via pip:

# Assuming you unzipped to ~/johnsnowlabs, you can install all wheels like this

pip install ~/johnsnowlabs/py_installs/*.whl

Step5: Test your installation.

Via shell:

python -c "from johnsnowlabs import *;print(nlp.load('emotion').predict('Wow that easy!'))"

or in Python:

from johnsnowlabs import *

nlp.load('emotion').predict('Wow that easy!')
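Before installing from the unzipped bundle (Step 4), it can be worth confirming that the transfer is complete. The following is a minimal sketch using only the standard library; `inspect_offline_bundle` is a hypothetical helper, and the three sub-folder names are taken from the offline_zip_dir description, with `licenses` as the assumed name of the third.

```python
from pathlib import Path
from typing import Dict, List

# Sub-folder names as described for offline_zip_dir; "licenses" is an assumption.
EXPECTED_SUBFOLDERS = ("py_installs", "java_installs", "licenses")


def inspect_offline_bundle(root: str) -> Dict[str, List[str]]:
    """Map each expected sub-folder to the sorted file names it contains."""
    base = Path(root).expanduser()
    report: Dict[str, List[str]] = {}
    for name in EXPECTED_SUBFOLDERS:
        folder = base / name
        if not folder.is_dir():
            raise FileNotFoundError(f"Missing sub-folder: {folder}")
        report[name] = sorted(p.name for p in folder.iterdir() if p.is_file())
    return report


# Example call on the offline machine:
# print(inspect_offline_bundle("~/johnsnowlabs"))
```

An empty list for py_installs or java_installs suggests the zip was truncated or unzipped to a different location.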

Into Airgap/Offline Manual

Download all files yourself from the URLs printed by nlp.install(). Then follow the automatic instructions starting from Step 2, i.e. provide the files on your offline machine yourself.

# Print all URLs to files you need to provide on your host machine

nlp.install(offline=True)

Into a freshly created Databricks cluster automatically

To install to Databricks you must provide your Databricks access token (databricks_token) and host URL (databricks_host).

You can provide the secrets to the install function with any of the methods listed above, i.e. using access_token, browser login, a JSON file, or manually defining secrets.

You can get it from:

# Create a new Cluster with Spark NLP and all licensed libraries ready to go:

nlp.install_to_databricks(databricks_host='https://your_host.cloud.databricks.com', databricks_token='dbapi_token123')

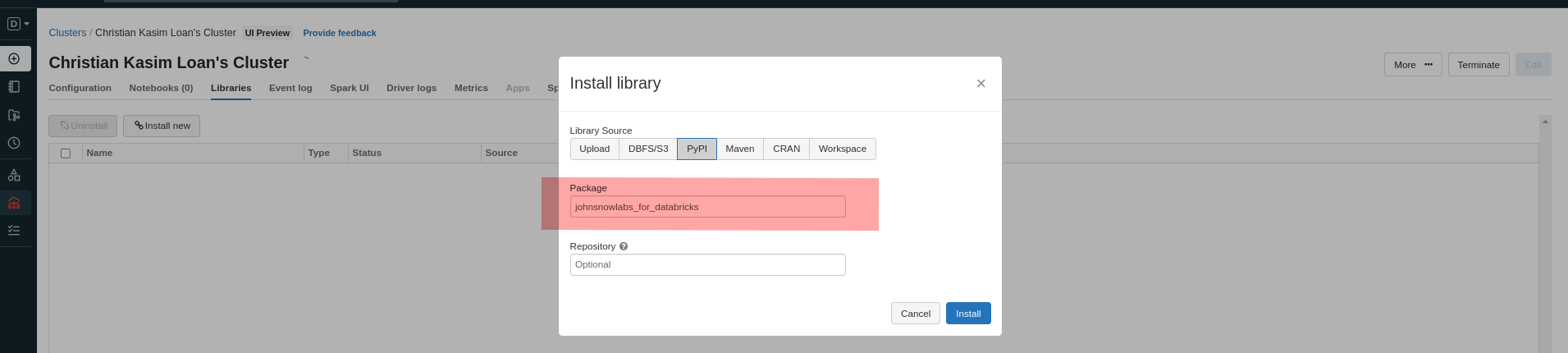

Into Existing Databricks cluster Manual

If you do not wish to use the recommended automatic installation and want to install manually instead, you must install the johnsnowlabs_for_databricks PyPI package instead of johnsnowlabs, via the UI or any method of your choice.

License Management & Caching

Storage of License Data and License Search behaviour

The John Snow Labs library caches license data in ~/.johnsnowlabs/licenses whenever a new one is provided.

After having provided license data once, you don’t need to specify it again, since the cached license will be used.

Use the local_license_number and remote_license_number parameters to switch between multiple licenses.

Note: Locally cached licenses are numbered in the order they have been provided, starting at 0.

remote_license_number=0 might not be the same as local_license_number=0.

Use the following functions to see all your available licenses.

List all available licenses

This shows you all licenses for your account in https://my.johnsnowlabs.com/.

Use this to decide which license number to install when installing via browser or access token.

nlp.list_remote_licenses()

List all locally cached licenses

Use this to decide which license number to use when using nlp.start() or nlp.install() to specify which local license you want to load.

nlp.list_local_licenses()

License Search precedence

If there are multiple possible sources for licenses, the following order takes precedence:

- Manually provided license data by defining all license parameters.

- Browser / Access Token.

- OS environment variables, for any variable names that match up with secret names.

- /content/*.json, for any JSON file smaller than 1 MB.

- current_working_dir/*.json, for any JSON file smaller than 1 MB.

- ~/.johnsnowlabs/licenses, for any licenses.

JSON files are scanned if they have any keys that match up with names of secrets.

Name of the json file does not matter, file just needs to end with .json.
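The JSON scanning rule described above can be sketched as follows. This is an illustration of the described behaviour, not the library's actual implementation: `find_license_json` is a hypothetical helper, the key set is copied from the license variable names in this document, and the exact byte threshold for "1 MB" is an assumption.

```python
import json
from pathlib import Path
from typing import List

LICENSE_KEYS = {
    "AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "HC_SECRET", "HC_LICENSE",
    "OCR_SECRET", "OCR_LICENSE", "JSL_LEGAL_LICENSE", "JSL_FINANCE_LICENSE",
    "SPARK_NLP_LICENSE",
}
MAX_BYTES = 1_000_000  # "smaller than 1 MB"; the exact threshold is an assumption


def find_license_json(folder: str) -> List[Path]:
    """Return .json files under 1 MB in `folder` with at least one license key."""
    matches = []
    for path in sorted(Path(folder).glob("*.json")):
        if path.stat().st_size >= MAX_BYTES:
            continue
        try:
            data = json.loads(path.read_text())
        except (json.JSONDecodeError, UnicodeDecodeError):
            continue  # not valid JSON, so it cannot be a license file
        if isinstance(data, dict) and LICENSE_KEYS & data.keys():
            matches.append(path)
    return matches


# Example call: print(find_license_json("."))
```

Running this in your working directory shows which files would be candidates under the stated rule, which helps explain why an unexpected license was picked up.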

Upgrade Flow

Step 1: Upgrade the johnsnowlabs library.

pip install johnsnowlabs --upgrade

Step 2: Run install again, using one of the Authorization Flows.

nlp.install()

The John Snow Labs teams are working hard to push out new releases and features each week!

Simply run pip install johnsnowlabs --upgrade to get the latest open source libraries.

Once the johnsnowlabs library is upgraded, it will detect any outdated libraries and inform you that you can upgrade them by running nlp.install() again.

You must run one of the Authorization Flows again to gain access to the latest enterprise libraries.
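To confirm that Step 1 took effect, the installed version can be read from the package metadata before and after upgrading. The following is a minimal sketch using the standard library's importlib.metadata; `installed_version` is a hypothetical helper name.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str = "johnsnowlabs") -> Optional[str]:
    """Return the installed version of `package`, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


print(installed_version() or "johnsnowlabs is not installed")
```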

Next Steps & Frequently Asked Questions

How to setup Java 8

Join our Slack channel

Join our channel, to ask for help and share your feedback. Developers and users can help each other get started here.

Where to go next

If you want to get your hands dirty with any of the features, check out the NLU examples page or the Licensed Annotators Overview. Detailed information about the johnsnowlabs library's APIs, concepts, components and more can be found on the following pages: